I will start by admitting that it is difficult to put into words everything I want to say about Core Web Vitals and Google PageSpeed in a single post. Core Web Vitals (together with Google PageSpeed and Google Lighthouse, which people tend to treat as the same thing) has been a hot topic since mid-2020: Google has hyped the matter heavily, and everyone says something different.

As a result, there is a lot of information on the Internet about this subject, but also a lot of noise, and many people speak from hearsay. Here I am going to speak from a solid foundation, since I have been researching this topic for around six months.

I have always been strongly against Google PageSpeed and every tool that hands out a score, since those scores are rarely objective about loading speed. Loading times in seconds, on the other hand, don’t lie.

I won’t beat around the bush any longer. I’m going to try to explain a little of the history of Google PageSpeed and how to get a good score from the point of view of logic and truth.

What is Google PageSpeed today?

I’m going to explain what Google PageSpeed is right now, leaving the past aside, since Google PageSpeed has been many things over time (and I’m not exaggerating).

Currently, Google PageSpeed is the visible part, the tool; specifically, it is called Google PageSpeed Insights. It is the tool that gives you the score along with tips and good practices to improve “loading speed” and the user experience.

Behind it is Google Lighthouse, the engine that drives Google PageSpeed Insights. Lighthouse currently ships with every copy of Google Chrome. Many tools (such as GTMetrix) also use it to run their own Google PageSpeed tests.

On the other hand, we have Core Web Vitals, which gained a lot of fame in 2020. They share metric definitions with Google Lighthouse, but only a few of those metrics are used to calculate and give scores.

Core Web Vitals is a Google “initiative” that uses data from the Chrome UX Report to show aggregated values (Google evaluates the 75th percentile rather than a plain average) of metrics taken directly from real users, which, as we will see in the following sections, can be counterproductive and cause quite a few problems.

So, knowing this, we are going to answer the following questions.

What is Google PageSpeed Insights?

Initially, Google PageSpeed was the tool, the algorithm and everything else, but today Google PageSpeed Insights is simply the interface where “normal” users draw their conclusions (which are usually wrong) and become paranoid.

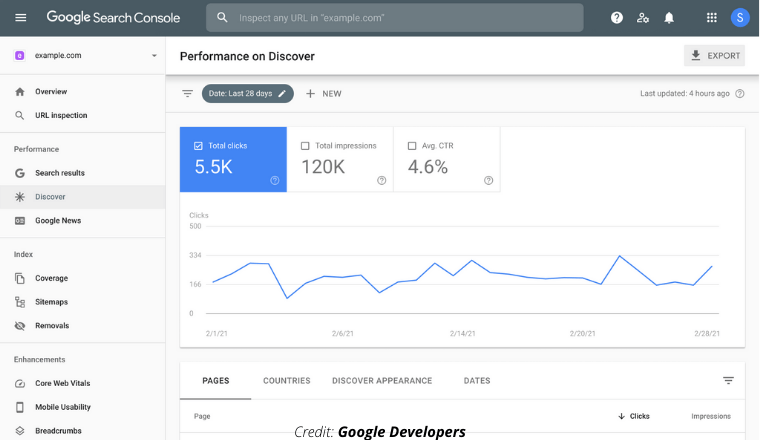

Google PageSpeed Insights displays a mix of live Google Lighthouse data and Core Web Vitals data pulled directly from the Chrome UX Report.

It is a fairly complete tool, but it has little documentation of its own (most of the documentation belongs to Lighthouse and Core Web Vitals), and most people do not know how to interpret the information it provides.

What is Google Lighthouse?

We can say that Google Lighthouse, currently, is the algorithm behind Google PageSpeed Insights.

Google Lighthouse does not only collect metrics related to loading speed; in fact, most of its metrics concern UX and good practices in web development.

Google Chrome and other Chromium-based browsers can run Google Lighthouse themselves, as they carry the implementation at their core.

The data stored in the Chrome UX Report database is collected from real Chrome users rather than from Lighthouse runs, although it covers some of the same metrics. The Core Web Vitals data that we see in Google Search Console is based on that stored field data.

What is Core Web Vitals?

According to Google, Core Web Vitals is going to be a ranking factor in 2021. It is an aggregate obtained from the Chrome UX Report for a few selected metrics, most of which Google Lighthouse also measures.

The metrics behind Core Web Vitals have changed over time, but at the time of this writing they are LCP, FID, and CLS.
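To make the three metrics more concrete, here is a minimal sketch of how a set of real-user samples could be rated. The thresholds and the use of the 75th percentile are assumptions taken from Google's public guidance, not from this article, and the names `rateVital` and `percentile75` are made up for the example.

```typescript
type Rating = "good" | "needs-improvement" | "poor";
type VitalName = "LCP" | "FID" | "CLS";

// Assumed thresholds: [upper bound for "good", upper bound for "needs improvement"].
// LCP and FID are in milliseconds; CLS has no unit.
const THRESHOLDS: Record<VitalName, [number, number]> = {
  LCP: [2500, 4000],
  FID: [100, 300],
  CLS: [0.1, 0.25],
};

// Rates a single aggregated value for one metric.
function rateVital(name: VitalName, value: number): Rating {
  const [good, poor] = THRESHOLDS[name];
  if (value <= good) return "good";
  if (value <= poor) return "needs-improvement";
  return "poor";
}

// 75th percentile of the samples, using the nearest-rank method.
function percentile75(samples: number[]): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil(0.75 * sorted.length);
  return sorted[rank - 1];
}
```

With four LCP samples of 1200, 1800, 2600 and 5200 ms, the 75th percentile is 2600 ms, so a single slow visitor is enough to push the page out of the “good” range.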

There are some additional metrics. For example, the FCP marks the first step on the way to the LCP, the Speed Index summarizes how quickly the visible content is painted, and there are some more that we will explain shortly.

We will also explain the problems with pulling Core Web Vitals from the Chrome UX Report and the issues involved in measuring the performance of real users.

What is the relationship between PageSpeed and SEO?

Well, really, none. Although many have attributed miraculous properties to the Google PageSpeed score, the truth is that it has none. Typically, where loading speed makes a difference in SEO is in indexing and crawling.

So far, I have seen only one website with a bad PageSpeed score lose positions in the SERPs, and it had huge indexing problems caused by JS saturation during crawling. Obviously, solving these problems improves the score and, at the same time, lets Googlebot crawl the website correctly.

The tool that Google provides to monitor indexing and crawling is Google Search Console. It is fundamental for spotting problems and much more helpful than all the information offered in Google PageSpeed Insights. Most people who complain about poor PageSpeed ratings have never looked at the data in Google Search Console’s “Crawl Stats” report.

Could the Google PageSpeed or Core Web Vitals score influence SEO in the future? That is the subject of the next section.

Does Core Web Vitals influence SEO?

The answer is exactly the same as above, but with a few nuances.

Google has said that, throughout 2021, changes will be rolled out to the search algorithm so that these scores affect the SERPs as one more ranking factor. Honestly, I don’t think that is feasible unless the impact of certain technical aspects on the score is relaxed a bit.

On the one hand, it is hard to take on and carry out certain adaptations without affecting business decisions. On the other, penalizing sites based on the loading results of real users can even be “classist”, depending on the market and the country that our websites target.

Not everything is black or white; there are intermediate points, and balance is precisely what I think is missing right now. This has led many website administrators to become obsessed with the Core Web Vitals results in Google Search Console.

PageSpeed and Core Web Vitals = WPO?

No. Neither Google PageSpeed nor Core Web Vitals nor Google Lighthouse is synonymous with “loading speed” or WPO.

WPO (Web Performance Optimization) is a concept created in 2004 in the US that encompasses good practices aimed at improving the loading speed of a website, whereas the Google services mentioned above are roughly 80% good practices for UX and only 20% loading speed.

Google PageSpeed and Core Web Vitals have gone down the UX path, with metrics like LCP and CLS that are really user-oriented and not web performance-oriented, although they can cover other technical aspects.

For roughly two years, Google PageSpeed (and the entire stack) has been pushing site owners to prioritize loading the elements at the top of the page. That is not actual loading speed but visual posturing.

WPO goes much further: it is about optimizing a website so that it loads flawlessly, like a Swiss watch, while consuming as few resources as possible.

Is a 100/100 Google PageSpeed score worth pursuing?

Leaving aside Core Web Vitals, we will talk about 100 out of 100 in Google PageSpeed.

Many people want to achieve 100 out of 100 in Google PageSpeed, but they do not know what it implies or what it requires, and in many cases it is not feasible without rethinking the web project and starting from scratch.

Keep in mind that, in 99.9% of cases, getting 100 out of 100 means developing the website from scratch and making a VERY HIGH investment in development. It also means sacrificing marketing tools that are usually vital to a company’s business: forget Google Analytics, marketing automation tools, the Facebook pixel, Google Ads, and so on.

100 out of 100 on PageSpeed is difficult, if not impossible, to achieve in practice. If a website is more than a prototype and we want it to sell or monetize properly, it is very hard to reach that score without losing sales or the ability to optimize them.

Improve Google PageSpeed score

There is no exact method to improve your Google PageSpeed score. We simply have to improve the results of individual metrics.

Most metrics are time-based, while others (like CLS) are computed from a formula.

Next, I will try to explain all the metrics that are currently involved in Google PageSpeed, Lighthouse and Core Web Vitals, and the relationship among them.

Bear in mind that, to improve the scores, you have to satisfy the recommendations shown in the Google PageSpeed Insights interface.

This may sound easy, but in 90% of cases you will need technical knowledge. In the remaining 10%, technical knowledge is not enough: you need either a miracle or an outright sacrifice, which is exactly what we explained above.

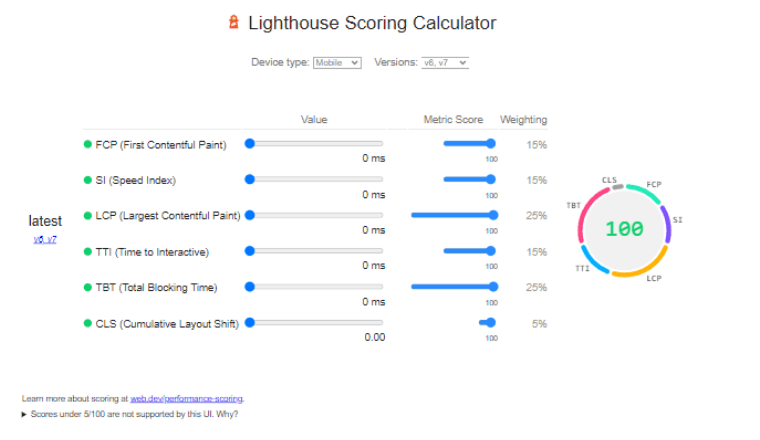

The Google Lighthouse algorithm is public, along with the percentages and weight for each metric: https://googlechrome.github.io/lighthouse/scorecalc/
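As a rough illustration of how such a weighted score works, the sketch below combines per-metric scores (each already on a 0 to 100 scale) into one number. The weights are the ones I understand Lighthouse 6 and 7 to use; treat them as an assumption and check the calculator above for the version you are testing. The real algorithm first converts each raw timing into a 0 to 100 score with a curve, which is skipped here, and `overallScore` is a name invented for the example.

```typescript
type MetricId = "FCP" | "SI" | "LCP" | "TTI" | "TBT" | "CLS";

// Assumed weights (percentages) for Lighthouse 6/7; they change between versions.
const WEIGHTS: Record<MetricId, number> = {
  FCP: 15,
  SI: 15,
  LCP: 25,
  TTI: 15,
  TBT: 25,
  CLS: 5,
};

// Weighted average of the per-metric scores, rounded to an integer.
function overallScore(metricScores: Record<MetricId, number>): number {
  let weighted = 0;
  let totalWeight = 0;
  for (const id of Object.keys(WEIGHTS) as MetricId[]) {
    weighted += metricScores[id] * WEIGHTS[id];
    totalWeight += WEIGHTS[id];
  }
  return Math.round(weighted / totalWeight);
}
```

Under these weights, a page that is perfect everywhere except for a CLS score of 0 still gets 95, while dropping the FCP score to 40 costs nine points.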

The Lighthouse algorithm keeps being updated, and the percentages and weights change between versions, which complicates matters. Without further ado, I’m going to explain some Google PageSpeed / Google Lighthouse and Core Web Vitals metrics, along with how to improve them.

Google PageSpeed: FCP

Let’s start with one of the most important metrics and one of the most difficult to improve.

The FCP (First Contentful Paint) accounts for 15% of the total score and is usually the metric that lowers it the most, since CSS and complex JS tend to hurt the FCP a lot.

The FCP measures the time until the browser paints the first piece of content (text or an image) in the visible part of the page at load time, the “above the fold” area.

To improve the FCP score in Google PageSpeed and Core Web Vitals, we can apply the following WPO techniques:

- Improve response times and TTFB, usually with a more efficient web server (LiteSpeed or Nginx) or implementing a caching system at the server level.

- Implement browser cache or caching system, although this only affects measurements on real users.

- Implement an agile and well-structured load using resource hints or resource preloading: preload, dns-prefetch, preconnect, etc.

- Implement a CDN, mainly to speed up the loading of JS and CSS.

- Use a web server or CDN with HTTP/2 or HTTP/3, since multiplexing speeds up sending files to the visitor’s browser.

- Audit all external requests to third-party hosts and adjust them using asynchronous and deferred loading.

- Eliminate excess JS and CSS, through minification, conditional loading, or outright removal of resources. Although this may seem easy, it is one of the most difficult parts due to current trends in themes and web development in general.
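The resource hints mentioned in the list end up as `<link>` tags in the `<head>` of the page. Here is a minimal sketch of a helper that prints them; `renderHint` and the URLs used with it are hypothetical, and the `href` values are assumed to be trusted (no escaping is done).

```typescript
type Hint =
  | { rel: "preload"; href: string; as: "style" | "script" | "font" | "image" }
  | { rel: "preconnect" | "dns-prefetch"; href: string };

// Renders one resource hint as an HTML <link> tag.
function renderHint(hint: Hint): string {
  if (hint.rel === "preload") {
    // Fonts are fetched in anonymous CORS mode, so a font preload
    // needs the crossorigin attribute to be reused by the browser.
    const cors = hint.as === "font" ? " crossorigin" : "";
    return `<link rel="preload" href="${hint.href}" as="${hint.as}"${cors}>`;
  }
  return `<link rel="${hint.rel}" href="${hint.href}">`;
}

// Example: open the CDN connection early and preload the critical CSS.
const headTags: string = [
  renderHint({ rel: "preconnect", href: "https://cdn.example.com" }),
  renderHint({ rel: "preload", href: "/css/critical.css", as: "style" }),
].join("\n");
```

Hints only reorder the work; they do not reduce it, so preloading everything is the same as preloading nothing.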

In many cases, FCP problems come from an excess of JS and CSS or from certain libraries with many dependencies that cause loading problems.

We must be aware that if our website genuinely needs certain JavaScript or CSS, there are no miracles: either we remove the functionality and its code with it, or there is little else we can do.

If we have a WordPress website with a complex multipurpose theme with a lot of CSS and JS and we want a good score on Google PageSpeed, we can apply all the WPO techniques listed above, but there will come a time when we will not be able to get more improvement without redoing the web completely.

Google PageSpeed: LCP

The LCP (Largest Contentful Paint) builds on the FCP, which forms its first part. The LCP accounts for 25% of the total score, and it measures the time it takes to render the largest element, by dimensions, in the top part of the page.

The largest element “above the fold” can be an image, a block of text, or an element with a CSS background image. The problem is that if the element Google picks depends on CSS, it cannot be painted until the stylesheet has been downloaded and applied, which can be very time-consuming.

The total time runs from the moment the page begins to load, through all the blocking caused by JS and CSS, until the largest element has been rendered. For this reason, it includes the first part, the FCP.

In most cases, improving the FCP will also improve the LCP. Many people try to fine-tune only the element that Google detects as the LCP, without realizing that all the blocking that occurs before it also counts.

To improve the LCP, we have to fix that blocking and apply everything listed for the FCP, since the LCP partly includes the FCP.

In addition to improving the FCP, the LCP also improves by solving “Opportunities” such as “Eliminate render-blocking resources”. This can be done by reducing the CSS and JS or by changing how resources are loaded. We can use preconnect or preload to stagger the load, together with asynchronous and deferred loading for the JS, but miracles do not exist and, on very overloaded sites, it may not be enough.

If you want more details, scroll down a bit in this article to the “Opportunities” or “Diagnostics” sections.

Google PageSpeed: CLS

The CLS (Cumulative Layout Shift) has a very low weight, 5%. It is not a “loading speed” metric but rather a UX metric that measures visual stability.

It is the only metric computed from a formula rather than measured in time, which means it is not very objective. In practice, the CLS measurement misfires like a fairground rifle.

The CLS measures how much visible elements shift unexpectedly on the screen while the page loads and afterwards.
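To show what “computed from a formula” means, here is a simplified sketch. As I understand Google's definition, each shift is scored as the fraction of the viewport affected multiplied by the fraction of the viewport the content moved, and shifts that follow recent user input are ignored. The `LayoutShiftSample` interface is made up for this example, and the plain sum is the original definition; newer versions group shifts into time windows instead.

```typescript
// Hypothetical, simplified description of one layout shift.
interface LayoutShiftSample {
  impactFraction: number;   // share of the viewport touched by the moved content (0..1)
  distanceFraction: number; // how far it moved, relative to the viewport (0..1)
  hadRecentInput: boolean;  // true if the user had just clicked or typed
}

// Score of a single shift: impact fraction times distance fraction.
function shiftScore(shift: LayoutShiftSample): number {
  return shift.impactFraction * shift.distanceFraction;
}

// Cumulative score: sum of all shifts the user did not trigger.
function cumulativeLayoutShift(shifts: LayoutShiftSample[]): number {
  return shifts
    .filter((shift) => !shift.hadRecentInput)
    .reduce((sum, shift) => sum + shiftScore(shift), 0);
}
```

A banner that pushes half of the screen down by a fifth of its height scores 0.5 × 0.2 = 0.1 on its own, which already sits at the limit of what Google calls “good”.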

This can be avoided by declaring the dimensions of all elements in the HTML, taking care with how they are lazy loaded, and avoiding the dynamic injection of new elements through JS after the initial load. In WordPress, WP Rocket lets you easily add the dimensions to the markup if your theme does not.

There is no more secret to the CLS than that; the steps above are enough to pass it. The problem is that in many cases, as I said, the measurement is not accurate and can give us a bad score even when the website has no real problem.

Google PageSpeed: FID

The FID (First Input Delay) is not mentioned very often because it is very easy to pass. If our website happens to fall short here, implementing an instant-click library such as instant.page would solve it.

The FID measures the delay between the user’s first interaction with the page, such as clicking a link or button, and the moment the browser is able to start responding to it.

The FID is measured in Core Web Vitals with real user data collected within the Chrome UX Report, but it is not included in Lighthouse directly.

Usually, if we have problems with the other metrics, we will also have them with the FID, especially when the TTI and TBT are poor, as these two blocking metrics are heavily influenced by JS and complex CSS.

Google PageSpeed: Time To Interactive

The TTI (Time To Interactive) measures the time it takes for the website to become interactive for the user after loading. It appears only in Google PageSpeed Insights and Google Lighthouse, not in Core Web Vitals.

There is no exact way to improve TTI. It is simply a matter of improving the main metrics and making sure that the execution of asynchronous JavaScript does not drag on too long or block interaction for the user.

Google PageSpeed: Total Blocking Time

The TBT (Total Blocking Time) covers the window between the FCP and the TTI, without going as far as the Speed Index. It adds up the time during which long tasks block the main thread in that window. The blocking usually comes from loading JS libraries and their dependencies, and from complex style sheets that pull in other CSS files.

It is a metric related to the FID but much more complex and artificial. It can be improved by improving the FCP and TTI, although we can have a bad FCP and a good TBT.
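The calculation can be sketched in a few lines. As I understand Lighthouse's definition, any main-thread task longer than 50 ms is a “long task”, and only the part beyond those 50 ms counts as blocking. The `MainThreadTask` shape is invented for the example, and for simplicity the sketch only counts tasks that fall entirely between FCP and TTI, whereas the real tool also handles tasks that straddle the limits.

```typescript
// Hypothetical record of one main-thread task; all times in milliseconds.
interface MainThreadTask {
  start: number;
  duration: number;
}

// Assumed long-task threshold: time beyond this counts as blocking.
const LONG_TASK_MS = 50;

// Sums the blocking portion of every task that runs between FCP and TTI.
function totalBlockingTime(tasks: MainThreadTask[], fcp: number, tti: number): number {
  return tasks
    .filter((task) => task.start >= fcp && task.start + task.duration <= tti)
    .reduce((sum, task) => sum + Math.max(0, task.duration - LONG_TASK_MS), 0);
}
```

This is why splitting one 250 ms script into several short tasks lowers the TBT even though the total work stays the same.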

Google PageSpeed: Speed Index

Speed Index is a secondary metric, and although it appears in Google PageSpeed Insights, it is not part of Core Web Vitals.

The Speed Index measures how quickly the content of the page becomes visible to the user during loading, although I also have to add that the measurement made by Google PageSpeed Insights is not objective because of the location from which it is taken.

There is no direct way to improve the Speed Index. You simply have to improve the three previous metrics, especially the FCP, and try not to overdo the asynchronous or deferred JS that could affect interaction.

Google PageSpeed Insights opportunities

In Google PageSpeed Insights and Google Lighthouse, we have a section that lists detailed “Opportunities” we can use to improve the score and, in some cases, to load better on devices with little processing power (for JS).

The good thing is that each opportunity also shows the specific files we can work on to solve the problem.

I will probably miss some “Opportunities” in this list, but I will try to cover most of them and how to solve them.

Eliminate render-blocking resources

It is one of the opportunities that appears most often in Google PageSpeed and Google Lighthouse. It is about eliminating the blocking during loading caused by dependencies or by files that call other files, for both JS and CSS, although the problem usually lies in complex JS.

With resource hints and asynchronous or deferred loading, we can achieve interesting improvements here, but miracles do not exist.
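As a small illustration of asynchronous and deferred loading, the sketch below prints a `<script>` tag for each strategy. `renderScript` and the file paths are hypothetical. A plain tag blocks HTML parsing while it downloads and runs; `async` downloads in parallel and runs as soon as it arrives, in no guaranteed order; `defer` downloads in parallel and runs in document order once parsing has finished.

```typescript
type LoadStrategy = "blocking" | "async" | "defer";

// Renders a <script> tag using the chosen loading strategy.
function renderScript(src: string, strategy: LoadStrategy): string {
  // "blocking" is the browser default, so it needs no attribute.
  const attribute = strategy === "blocking" ? "" : ` ${strategy}`;
  return `<script src="${src}"${attribute}></script>`;
}

// Example: independent analytics can be async; scripts that depend
// on each other keep their order with defer.
const scriptTags: string[] = [
  renderScript("/js/analytics.js", "async"),
  renderScript("/js/vendor.js", "defer"),
  renderScript("/js/app.js", "defer"),
];
```

Switching a script to `async` or `defer` only helps if nothing inline on the page expects it to have run already, which is exactly where complex themes tend to break.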

With certain themes or page builders, such as Elementor, it is impossible to solve this opportunity and get 100 out of 100. The impact depends entirely on the complexity of the website in question.

Reduce initial server response time

It usually appears when the website has no cache or the web server has an efficiency problem. If we have a cache, the opportunity shows up the first time we run the test with Google PageSpeed Insights but no longer on the second run.

Remove unused JavaScript

As the name suggests, this looks easy to fix. The Google Lighthouse algorithm can, in theory, detect when a JS or CSS resource is not used.

It may look easy to fix, but in many cases it is a false positive, and we have no way of telling Google that we actually do use the resource.

If the JS is needed on some parts of the website and not on others, we can use conditional loading (in the case of WP) to fine-tune the load.
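The idea behind conditional loading is the same on any platform: decide per page which files are really needed. This is a language-neutral sketch of that idea, not WordPress code; the `Asset` shape, `assetsForPath` and the paths are all invented for the example.

```typescript
// Hypothetical description of a script or stylesheet and where it is needed.
interface Asset {
  src: string;
  onlyOn?: RegExp[]; // if omitted, the asset is loaded on every page
}

// Returns the files that should be loaded for the given URL path.
function assetsForPath(assets: Asset[], path: string): string[] {
  return assets
    .filter((asset) => !asset.onlyOn || asset.onlyOn.some((pattern) => pattern.test(path)))
    .map((asset) => asset.src);
}

const siteAssets: Asset[] = [
  { src: "/js/main.js" },
  { src: "/js/contact-form.js", onlyOn: [/^\/contact\/?$/] },
  { src: "/js/slider.js", onlyOn: [/^\/$/] },
];
```

With this configuration, the contact page gets `main.js` and `contact-form.js` but not the slider script that only the home page uses.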

Remove unused CSS

Exactly the same applies to the CSS as to the JavaScript: the Google Lighthouse algorithm used in Google PageSpeed Insights misfires like a fairground rifle in most cases and tends to give false positives that we cannot “contest”.

If that CSS is used only on one or more pages of the website, we can use conditional loading to fine-tune the loading.

Properly size images

Google Lighthouse can detect whether images have pixel dimensions that are too large for the space in which we display them, so that they have to be scaled down by CSS.

It is usually easy to fix for most images: set a maximum width that matches the largest layout in which they will be displayed.

In WordPress, we can easily solve it with the Imsanity plugin, although we can also do it before uploading them to the website with a desktop application.

Defer offscreen images

This opportunity appears when lazy loading is not implemented on the website or when it is not being applied to some image.

Implementing lazy loading in WordPress is relatively easy, but there can always be an image to which it does not apply (especially backgrounds), and it may be necessary to add lazy loading manually or by another method.

Native lazy loading is recommended over JS-based lazy loading, as it is much more efficient, although it depends on the web browser’s compatibility.
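Native lazy loading is just the `loading` attribute on the image tag. The sketch below prints an `<img>` tag that uses it and also declares the width and height, which helps with the CLS at the same time. `renderImg`, the `ImageSpec` shape and the `aboveTheFold` flag are made up for the example; the flag reflects my assumption that images visible at load time should not be lazy loaded, since that would delay the LCP.

```typescript
// Hypothetical description of an image to print into the HTML.
interface ImageSpec {
  src: string;
  alt: string;
  width: number;  // intrinsic width in pixels
  height: number; // intrinsic height in pixels
  aboveTheFold?: boolean;
}

// Renders an <img> tag with explicit dimensions and native lazy loading.
function renderImg(image: ImageSpec): string {
  // Images visible at load time are loaded eagerly; the rest wait
  // until the visitor scrolls near them.
  const loading = image.aboveTheFold ? "eager" : "lazy";
  return (
    `<img src="${image.src}" alt="${image.alt}" ` +
    `width="${image.width}" height="${image.height}" loading="${loading}">`
  );
}
```

Browsers that do not support the attribute simply ignore it and load the image normally, so nothing breaks.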

Minify JavaScript

As its name suggests, it simply asks us to minify the JavaScript resources. The theory is simple, but in practice almost no plugin or service reaches the level of minification that the Google Lighthouse algorithm demands.

Some JavaScript resources may stop working if they are minified too aggressively.

Minify CSS

Exactly the same as above: it is about minifying the CSS, but we may find that we cannot reach the levels required by Google.

In addition, if we minify too aggressively, we can damage the appearance of the website.

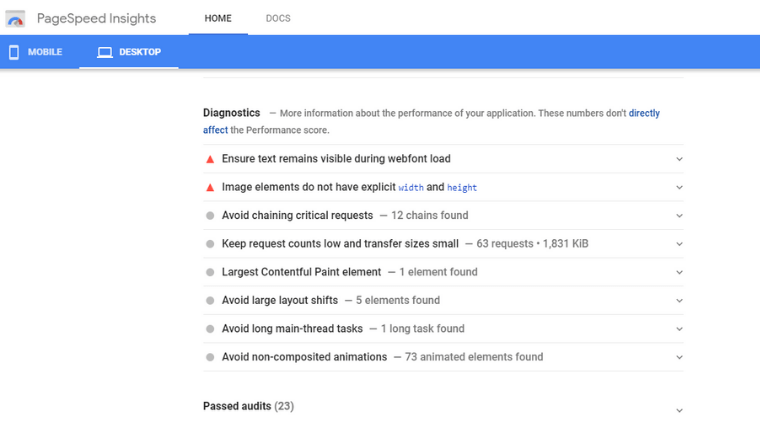

Google PageSpeed Insights Diagnostics

In addition to the opportunities, in the Google PageSpeed Insights interface, we also have a list of diagnostics. Diagnostics are more technical and come directly from Google Lighthouse.

Sometimes the tasks listed in diagnostics can give us a more detailed idea for troubleshooting and improving the score.

Something curious about the list of diagnostics is that it rarely has a middle ground: the items are either too technical or too obvious.

I am going to leave some out, but I will try to explain the most common ones.

Ensure text remains visible during webfont load

This is easy to fix. It usually appears when we optimize the loading of Google Fonts or other types of fonts loaded from third-party servers.

We simply have to set font-display to swap.
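For fonts we host ourselves, this means adding `font-display: swap;` to each `@font-face` rule. For Google Fonts, the stylesheet URL accepts a `display=swap` parameter. Here is a minimal sketch of a helper that adds it; `withFontDisplaySwap` is a made-up name and the URL is only an example.

```typescript
// Adds (or overwrites) the display=swap parameter on a font stylesheet URL.
function withFontDisplaySwap(stylesheetUrl: string): string {
  // If a display parameter is already present, replace its value.
  if (/[?&]display=/.test(stylesheetUrl)) {
    return stylesheetUrl.replace(/([?&]display=)[^&]*/, "$1swap");
  }
  // Otherwise append it with the right separator.
  const separator = stylesheetUrl.indexOf("?") === -1 ? "?" : "&";
  return `${stylesheetUrl}${separator}display=swap`;
}

const fontUrl: string = withFontDisplaySwap(
  "https://fonts.googleapis.com/css2?family=Roboto"
);
```

With `swap`, the browser shows the text immediately in a fallback font and switches once the web font arrives, which removes the warning at the price of a brief change in appearance.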

Minimize main-thread work

This is usually improved by optimizing the loading of JavaScript, as it is mainly what overloads the visitor’s browser during the loading of the website.

There is no specific fix for this; we have to apply the WPO techniques discussed throughout this article for improving the FCP and the LCP.

We can mitigate this diagnostic a bit by switching some scripts to asynchronous or deferred loading.

Serve static assets with an efficient cache policy

To improve this, we only have to apply browser caching, something very simple that may seem trivial but helps loading a lot from the user’s point of view.

The problem is that, in most cases, this rule appears for third-party resources loaded from other servers over which we have no control. Therefore, we will not be able to do anything to fix it.
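For the resources we do control, browser caching comes down to the `Cache-Control` header the server sends. This is a minimal sketch of one possible policy, under the assumption that static files carry a version or hash in their name (so a changed file gets a new URL); `cacheControlFor` and the example paths are hypothetical.

```typescript
// One year in seconds: 365 * 24 * 60 * 60.
const ONE_YEAR_SECONDS = 31536000;

// Chooses a Cache-Control header value based on the file extension.
function cacheControlFor(path: string): string {
  const isStaticAsset = /\.(css|js|woff2?|png|jpe?g|gif|webp|svg)$/i.test(path);
  if (isStaticAsset) {
    // Assumes versioned file names, so the file at this URL never changes.
    return `public, max-age=${ONE_YEAR_SECONDS}, immutable`;
  }
  // HTML and everything else: the browser must revalidate before reusing it.
  return "no-cache";
}
```

If your static files keep the same name when they change, a shorter `max-age` is the safer choice.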

Reduce JavaScript execution time

Like “Minimize main-thread work”, it depends entirely on the WPO techniques used to improve the FCP and LCP.

Reduce the impact of third-party code

We solve this by removing scripts loaded from third-party servers. In most cases, removing them is the only thing we can do. In other cases, we can host a local copy of the scripts so that we can cache them or apply some WPO techniques to them, but not always.

Image elements do not have explicit width and height

This is exactly what is explained in the case of CLS: we must specify the dimensions of all images in the code to avoid losing points for the CLS.

If you want to know what the CLS is, see the CLS section earlier in this post.

Avoid an excessive DOM size

Google PageSpeed and Google Lighthouse recommend a maximum number of DOM elements and a maximum nesting depth. When we work with themes or page builders with very complex structures, we can reach those limits and even exceed them.

This tends to hurt the FCP a bit, although it does not hurt the overall loading speed much. It does increase the work the visitor’s browser has to do when processing the HTML, and on a low-powered device it can be as harmful as excess JavaScript during loading.
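To get a feel for what this diagnostic looks at, the sketch below walks a simplified tree and reports the total number of nodes, the deepest nesting level and the largest number of children under one parent. The `DomNode` shape and `domStats` are invented for the example; the exact limits Lighthouse applies vary between versions, so none are hard-coded here.

```typescript
// Hypothetical, simplified DOM node.
interface DomNode {
  tag: string;
  children?: DomNode[];
}

interface DomStats {
  totalNodes: number;
  maxDepth: number;
  maxChildren: number;
}

// Walks the tree once and collects the three figures.
function domStats(root: DomNode): DomStats {
  let totalNodes = 0;
  let maxDepth = 0;
  let maxChildren = 0;

  function visit(node: DomNode, depth: number): void {
    totalNodes += 1;
    maxDepth = Math.max(maxDepth, depth);
    const children = node.children || [];
    maxChildren = Math.max(maxChildren, children.length);
    children.forEach((child) => visit(child, depth + 1));
  }

  visit(root, 1); // the root counts as depth 1
  return { totalNodes, maxDepth, maxChildren };
}
```

Page builders tend to wrap every block in several nested containers, which inflates both the node count and the depth without adding anything the visitor can see.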

Conclusions on Core Web Vitals and Google PageSpeed

I don’t know what will happen in the future, but my advice is not to obsess.

When people become obsessed, the problems begin: they stop improving other parts of the website that could bring them more benefit in order to focus on something that will bring little improvement to their business.

Finally, I want to add that PageSpeed is NOT WPO, and if you think it is, you are mistaken. Although it is called PageSpeed, it is not a service or initiative geared towards loading speed. It’s as if I opened a bar and called it “The Funeral Home”: nobody gets buried there; it is just a name.
